Object Detection and Image Segmentation with Deep Learning on Earth Observation Data: A Review-Part I: Evolution and Recent Trends

Abstract

1. Introduction

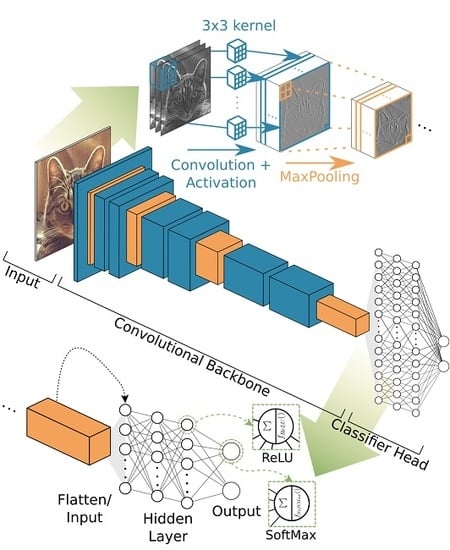

- The convolutional backbone is a strong feature extractor for natural signals: it preserves the fundamental structure of the signal and is sensitive to local connectivity.

- Instead of pairwise connections of neurons, kernel functions are used to connect layers, in order to learn features from training data.

- By sequentially repeating convolution, activation and pooling, CNN architectures follow the hierarchical structure of natural signals, in which low-level features combine into high-level features, and mimic the behaviour of the visual cortex of mammals [96,97,98,99].

- The modular composition of both the convolutional backbone itself and the overall architecture makes the CNN approach highly adaptable for a variety of tasks and optimisations.
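The convolution, activation and pooling sequence described in the list above can be sketched in a few lines. This is a minimal, dependency-free illustration, not an implementation from any of the cited works. The function names (`conv2d`, `relu`, `max_pool2x2`, `conv_block`), the toy image and the hand-set edge kernel are all assumptions made for this example. In a real CNN the kernel weights are learned from training data.

```python
def conv2d(image, kernel):
    """Valid 2D cross-correlation of a 2D list with a small 2D kernel."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b]
                for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(fmap):
    """Element-wise rectified linear unit."""
    return [[max(0, v) for v in row] for row in fmap]


def max_pool2x2(fmap):
    """2x2 max pooling with stride 2 (a trailing odd row or column is dropped)."""
    h = len(fmap) // 2 * 2
    w = len(fmap[0]) // 2 * 2
    return [
        [max(fmap[i][j], fmap[i][j + 1], fmap[i + 1][j], fmap[i + 1][j + 1])
         for j in range(0, w, 2)]
        for i in range(0, h, 2)
    ]


def conv_block(image, kernel):
    """One convolution -> activation -> pooling stage."""
    return max_pool2x2(relu(conv2d(image, kernel)))


# Toy 6x6 image with a vertical edge: dark left half, bright right half.
image = [[0, 0, 0, 1, 1, 1] for _ in range(6)]
# Hand-set horizontal-gradient kernel (hypothetical; normally learned).
kernel = [[-1, 0, 1] for _ in range(3)]

features = conv_block(image, kernel)
print(features)  # 2x2 feature map that responds to the edge
```

The 6x6 input shrinks to a 2x2 feature map: the convolution encodes the local edge structure and the pooling step halves the resolution. Stacking several such stages produces the low-to-high-level feature hierarchy.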

- Image recognition is understood as the prediction of a class label for a whole image.

- Image segmentation, also called semantic segmentation or pixel-wise classification, partitions the whole image into semantically meaningful classes, where the smallest segment can be a single pixel.

- Object detection predicts the locations of objects as bounding boxes, each with a class label.

- Instance segmentation extends object detection by additionally applying image segmentation within each predicted bounding box and class. This results in a segmentation mask for each predicted object. In this review, instance segmentation is discussed together with object detection, due to their evolutionary closeness.
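The four tasks defined above differ mainly in the shape of their output. The sketch below shows one possible representation for each on a toy 3x4 image. All values, class names and the helper `mask_to_bbox` are hypothetical and only meant to make the definitions concrete.

```python
def mask_to_bbox(mask):
    """Tightest box (row_min, col_min, row_max, col_max) around nonzero pixels."""
    rows = [i for i, row in enumerate(mask) if any(row)]
    cols = [j for j in range(len(mask[0])) if any(row[j] for row in mask)]
    if not rows:
        return None  # empty mask, no object
    return (rows[0], cols[0], rows[-1], cols[-1])


# Image recognition: one class label for the whole image.
image_label = "harbour"

# Image segmentation: one class id per pixel (0 = water, 1 = land).
semantic_map = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 0, 0],
]

# Instance segmentation: a binary mask per detected object plus its class.
instance = {
    "label": "ship",
    "mask": [
        [0, 0, 0, 0],
        [0, 1, 1, 0],
        [0, 1, 0, 0],
    ],
}

# Object detection: a bounding box and a class label per object.
# Here the box is derived from the instance mask for illustration.
detection = {"label": instance["label"], "bbox": mask_to_bbox(instance["mask"])}
print(detection)
```

Deriving the box from the mask illustrates why the two tasks are closely related: every instance mask implies a bounding box, but not the reverse.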

3. Evolution of CNN Architectures in Computer Vision

3.1. Image Recognition and Convolutional Backbones

3.1.1. Vintage Architectures

- The convolutional backbone consists of repeated convolutions that increase the feature depth and a resizing method, such as pooling with stride 2, that decreases resolution.

- In VGG-19, the repeated building blocks with stacked convolutions of constant size enlarge the receptive field and deepen the network.
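How stacked convolutions of constant size enlarge the receptive field, and how stride-2 pooling reduces resolution, can be checked with the standard receptive-field recurrence. The sketch below is illustrative only. The layer lists are simplified VGG-style blocks chosen for this example, not the exact VGG-19 configuration, and `receptive_field` is a hypothetical helper name.

```python
def receptive_field(layers):
    """Receptive field and cumulative stride of a layer stack.

    layers: list of (kernel_size, stride) tuples, input to output.
    Uses the recurrence rf += (k - 1) * jump; jump *= stride.
    """
    rf, jump = 1, 1
    for kernel_size, stride in layers:
        rf += (kernel_size - 1) * jump
        jump *= stride
    return rf, jump


conv3 = (3, 1)   # 3x3 convolution, stride 1
pool2 = (2, 2)   # 2x2 pooling, stride 2

# Two stacked 3x3 convolutions see a 5x5 window, three see 7x7.
print(receptive_field([conv3, conv3]))          # (5, 1)
print(receptive_field([conv3, conv3, conv3]))   # (7, 1)

# Two simplified blocks of (conv, conv, pool).
block = [conv3, conv3, pool2]
rf, jump = receptive_field(block + block)
print(rf, jump)                                 # 16 4

# With size-preserving padding in the convolutions, a 224-pixel
# input side is reduced by the cumulative stride.
print(224 // jump)                              # 56
```

Each additional 3x3 layer widens the window, and each pooling step doubles the growth rate of the following layers while halving the resolution of the feature map.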

3.1.2. Inception Family

- Bottleneck designs and complex building block structures

- Batch normalisation to speed up the training of deep networks via stochastic gradient descent

- Factorisation of convolutions in space and depth
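The effect of bottlenecks and factorisation on model size can be illustrated by counting weights. The following sketch assumes 64 or 256 channels purely for illustration and ignores biases. The helper names are hypothetical, and the numbers are not taken from any specific published architecture.

```python
def conv_params(k_h, k_w, c_in, c_out):
    """Number of weights in a standard convolution (biases ignored)."""
    return k_h * k_w * c_in * c_out


def depthwise_separable_params(k, c_in, c_out):
    """Depthwise k x k convolution followed by a 1x1 pointwise convolution."""
    return k * k * c_in + c_in * c_out


c = 64  # assumed channel count

# Factorisation in space: one 5x5 vs two stacked 3x3 (same receptive field).
full_5x5 = conv_params(5, 5, c, c)
two_3x3 = 2 * conv_params(3, 3, c, c)

# Factorisation in space: one 3x3 vs a 1x3 followed by a 3x1.
full_3x3 = conv_params(3, 3, c, c)
asymmetric = conv_params(1, 3, c, c) + conv_params(3, 1, c, c)

# Factorisation in depth: standard 3x3 vs depthwise separable 3x3.
separable = depthwise_separable_params(3, c, c)

# Bottleneck: reduce 256 -> 64 channels with 1x1, apply 3x3, expand again.
plain = conv_params(3, 3, 256, 256)
bottleneck = (conv_params(1, 1, 256, 64)
              + conv_params(3, 3, 64, 64)
              + conv_params(1, 1, 64, 256))

print(full_5x5, two_3x3)      # 102400 73728
print(full_3x3, asymmetric)   # 36864 24576
print(full_3x3, separable)    # 36864 4672
print(plain, bottleneck)      # 589824 69632
```

In every pairing the factorised or bottlenecked variant needs fewer weights than the plain convolution it replaces. This is the motivation behind the design elements listed above.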

3.1.3. ResNet Family

3.1.4. Efficient Designs

3.2. Image Segmentation

3.2.1. Naïve Decoder

3.2.2. Encoder–Decoder Models

3.3. Object Detection

3.3.1. Two-Stage Detectors

3.3.2. One-Stage Detectors

4. Popular Deep Learning Frameworks and Earth Observation Datasets

4.1. Deep Learning Frameworks

4.2. Earth Observation Datasets

- In Earth observation data, the sensor mostly has an overhead perspective of the scene, whereas natural images are captured from a side-looking perspective; hence, the same object classes appear differently.

- The data most intensively used in computer vision are three-channel RGB images, whereas Earth observation data often consist of a multichannel image stack with more than three channels. This has to be considered, especially when transferring models from computer vision to Earth observation applications.

- Computer vision model input data are often from the same sensor and platform, whereas in Earth observation both can change and data fusion has to be incorporated into the model.

- Objects in overhead images have no general orientation: objects of the same class can appear at any rotation through 360°, which has to be considered in the training data, the architecture or both. In natural images, by contrast, the bottom and top of the image, and therefore of the pictured objects, are usually well defined, so a general orientation of objects can be expected.

- In natural images, objects of interest tend to be in the centre of the image and in high resolution, whereas in Earth observation data the objects can lie off nadir or at image borders with coarse resolution.
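One common way to account for the missing general orientation in the training data is rotation augmentation. The sketch below is an assumption-laden illustration rather than a method prescribed by this review. It covers only lossless rotations in 90° steps, since arbitrary angles would need interpolation. Each pixel may hold any value, for example a tuple with more than three channels, and the helper names are hypothetical.

```python
def rot90(tile):
    """Rotate a 2D grid (list of rows) by 90 degrees clockwise."""
    return [list(row) for row in zip(*tile[::-1])]


def rotations(tile):
    """Return the tile at 0, 90, 180 and 270 degrees."""
    out = [[list(row) for row in tile]]
    for _ in range(3):
        out.append(rot90(out[-1]))
    return out


def augment(image, mask):
    """Rotate image and label mask together so they stay aligned."""
    return list(zip(rotations(image), rotations(mask)))


# Toy 2x2 tile with four channels per pixel (e.g. R, G, B, NIR).
image = [
    [(1, 1, 1, 9), (2, 2, 2, 9)],
    [(3, 3, 3, 9), (4, 4, 4, 9)],
]
mask = [
    [1, 0],
    [0, 0],
]

for rotated_image, rotated_mask in augment(image, mask):
    print(rotated_mask)
```

Rotating the label mask with the image matters for segmentation and detection targets. For bounding boxes, the box coordinates would have to be transformed in the same way.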

5. Future Research

6. Conclusions

- A stack of fully connected artificial neurons uses the extracted features to predict the probability of the class.

- Such deep models need specific normalisation schemes, such as batch normalisation, to make supervised training possible and faster [41].

- Residual connections further ease the training of increasingly deep architectures [43].

- The recent findings in neural architecture search (NAS) bring together complex network structures and efficient usage of parameters. With NAS, architectures are searched for by an artificial controller. This controller iteratively creates networks, tries to maximise specific metrics of those networks, and thereby finds highly efficient architectures [48,52].

- So-called atrous convolutions [58], which maintain resolution while extracting features, are widely used to cope with the trade-off between high resolution and feature depth.

- The combination of feature maps of different scales with context information from image level was found to contribute effectively to pixel wise classification [59].

- Two-stage object detectors show both good performance and adaptability. The most popular detectors are the Faster R-CNN [76] design and its successors. In the first stage, they propose class-agnostic regions of interest (RoIs) for objects. In the second stage, those RoIs are classified and each bounding box is regressed to tight object boundaries.

- For multiscale processing, the feature pyramid network (FPN) [77] enhances the convolutional backbone by merging high semantic features with precise localisation information.

- Cascading classifiers and bounding box regression suppress noisy detections by iteratively refining RoIs [79].

- Building models with NAS might lead to architectures that are overly optimised for specific tasks and datasets. Therefore, it is questionable whether such models, which have recently been successful in computer vision tasks, perform equally well in Earth observation, as was the case with hand-crafted designs. However, NAS can also be used to find Earth observation specific designs.

- The number of DL datasets for Earth observation applications is still small in relation to possible applications and sensor diversity. Since datasets are highly important to push the understanding of the interaction between DL models and specific types of data, an increase in datasets has huge potential for further advances for DL in Earth observation.

- Besides more datasets, weakly supervised learning provides encouraging results as an alternative to expensive dataset creation. It is especially important for proof-of-concept studies and experimental research.
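The atrous convolutions named in the list above can be illustrated in one dimension. The sketch assumes zero padding, an odd kernel length and a hand-set kernel. It is a simplified illustration of dilation, not the DeepLab implementation, and `atrous_conv1d` is a hypothetical helper name.

```python
def atrous_conv1d(signal, kernel, rate):
    """Zero-padded 1D cross-correlation with dilation `rate`.

    Output length equals input length (odd kernel length assumed).
    The kernel taps are spaced `rate` samples apart, so the window
    spans (len(kernel) - 1) * rate + 1 input samples.
    """
    k = len(kernel)
    half = (k // 2) * rate
    padded = [0] * half + list(signal) + [0] * half
    return [
        sum(kernel[t] * padded[i + t * rate] for t in range(k))
        for i in range(len(signal))
    ]


signal = [0, 0, 0, 0, 1, 0, 0, 0, 0]   # single impulse
kernel = [1, 1, 1]                      # hand-set, normally learned

dense = atrous_conv1d(signal, kernel, rate=1)
atrous = atrous_conv1d(signal, kernel, rate=2)

print(dense)   # impulse spreads over 3 neighbouring samples
print(atrous)  # impulse reaches samples 2 positions away
```

Both outputs keep the input length of nine samples, so resolution is maintained. With `rate=2` the same three weights cover a window of five samples, which widens the receptive field without pooling or extra parameters.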

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Bengio, Y. Deep Learning of Representations: Looking Forward. In Statistical Language and Speech Processing; Dediu, A.H., Martin-Vide, C., Mitkov, R., Truthe, B., Eds.; Springer: Berlin/Heidelberg, Germany, 2013; pp. 1–37.

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep Learning. Nature 2015, 521, 436–444.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet Classification with Deep Convolutional Neural Networks. In Advances in Neural Information Processing Systems; Pereira, F., Burges, C.J.C., Bottou, L., Weinberger, K.Q., Eds.; Curran Associates, Inc.: Red Hook, NY, USA, 2012; Volume 25, pp. 1097–1105.

- Voulodimos, A.; Doulamis, N.; Doulamis, A.; Protopapadakis, E. Deep learning for computer vision: A brief review. Comput. Intell. Neurosci. 2018, 2018, 7068349.

- Shrestha, A.; Mahmood, A. Review of Deep Learning Algorithms and Architectures. IEEE Access 2019, 7, 53040–53065.

- Zhang, L.; Zhang, L.; Du, B. Deep Learning for Remote Sensing Data: A Technical Tutorial on the State of the Art. IEEE Geosci. Remote Sens. Mag. 2016, 4, 22–40.

- Zhu, X.X.; Tuia, D.; Mou, L.; …

- Shermeyer, J.; Hogan, D.; Brown, J.; Etten, A.V.; Weir, N.; Pacifici, F.; Haensch, R.; Bastidas, A.; Soenen, S.; Bacastow, T.; … Dataset. arXiv 2020.

- Ding, L.; Tang, H.; Bruzzone, L. Improving Semantic Segmentation of Aerial Images Using Patch-based Attention. arXiv 2019, arXiv:1911.08877.

- Zhang, G.; Lei, T.; Cui, Y.; Jiang, P. A Dual-Path and Lightweight Convolutional Neural Network for High-Resolution Aerial Image Segmentation. ISPRS Int. J. Geo-Inf. 2019, 8, 582.

- Demir, I.; Koperski, K.; Lindenbaum, D.; Pang, G.; Huang, J.; Basu, S.; Hughes, F.; Tuia, D.; Raskar, R. DeepGlobe 2018: A Challenge to Parse the Earth Through Satellite Images. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR) Workshops, Salt Lake City, UT, USA, 18–23 June 2018; pp. 172–17209.

- Zhou, L.; Zhang, C.; Wu, M. D-LinkNet: LinkNet with Pretrained Encoder and Dilated Convolution for High Resolution Satellite Imagery Road Extraction. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 192–1924.

- Buslaev, A.; Seferbekov, S.S.; Iglovikov, V.; Shvets, A. Fully Convolutional Network for Automatic Road Extraction From Satellite Imagery. In Proceedings of the CVPR Workshops, Salt Lake City, UT, USA, 18–23 June 2018; pp. 207–210.

- Hamaguchi, R.; Hikosaka, S. Building Detection from Satellite Imagery using Ensemble of Size-Specific Detectors. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 223–2234.

- Iglovikov, V.; Seferbekov, S.; Buslaev, A.; Shvets, A. TernausNetV2: Fully Convolutional Network for Instance Segmentation. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 228–2284.

- Tian, C.; Li, C.; Shi, J. Dense Fusion Classmate Network for Land Cover Classification. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 262–2624.

- Kuo, T.; Tseng, K.; Yan, J.; Liu, Y.; Wang, Y.F. Deep Aggregation Net for Land Cover Classification. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 247–2474.

- Ji, S.; Wei, S.; Lu, M. Fully Convolutional Networks for Multisource Building Extraction from an Open Aerial and Satellite Imagery Data Set. IEEE Trans. Geosci. Remote Sens. 2019, 57, 574–586.

- Ji, S.; Wei, S.; Lu, M. A scale robust convolutional neural network for automatic building extraction from aerial and satellite imagery. Int. J. Remote Sens. 2019, 40, 3308–3322.

- Maggiori, E.; Tarabalka, Y.; Charpiat, G.; Alliez, P. Can Semantic Labeling Methods Generalize to Any City? The Inria Aerial Image Labeling Benchmark. In Proceedings of the IEEE International Geoscience and Remote Sensing Symposium (IGARSS), Fort Worth, TX, USA, 23–28 July 2017; pp. 3226–3229.

- Audebert, N.; Boulch, A.; Le Saux, B.; Lefèvre, S. Distance transform regression for spatially-aware deep semantic segmentation. Comput. Vision Image Underst. 2019, 189, 102809.

- Duke Applied Machine Learning Lab. DukeAMLL Repository of Winning INRIA Building Labeling. Available online: https://github.com/dukeamll/inria_building_labeling_2017 (accessed on 1 April 2020).

- Azimi, S.M.; Henry, C.; Sommer, L.; Schumann, A.; Vig, E. SkyScapes Fine-Grained Semantic Understanding of Aerial Scenes. In Proceedings of the IEEE International Conference on Computer Vision, Seoul, Korea, 27–28 October 2019; pp. 7393–7403.

- Cheng, G.; Han, J.; Zhou, P.; Guo, L. Multi-class geospatial object detection and geographic image classification based on collection of part detectors. ISPRS J. Photogramm. Remote Sens. 2014, 98, 119–132.

- Zhang, H.; Wu, J.; Liu, Y.; Yu, J. VaryBlock: A Novel Approach for Object Detection in Remote Sensed Images. Sensors 2019, 19, 5284.

- Tayara, H.; Chong, K.T. Object Detection in Very High-Resolution Aerial Images Using One-Stage Densely Connected Feature Pyramid Network. Sensors 2018, 18, 3341.

- Mundhenk, T.N.; Konjevod, G.; Sakla, W.A.; Boakye, K. A Large Contextual Dataset for Classification, Detection and Counting of Cars with Deep Learning. In Computer Vision–ECCV 2016; Leibe, B., Matas, J., Sebe, N., Welling, M., Eds.; Springer International Publishing: Cham, Switzerland, 2016; pp. 785–800.

- Koga, Y.; Miyazaki, H.; Shibasaki, R. A Method for Vehicle Detection in High-Resolution Satellite Images that Uses a Region-Based Object Detector and Unsupervised Domain Adaptation. Remote Sens. 2020, 12, 575.

- Hsieh, M.; Lin, Y.; Hsu, W.H. Drone-Based Object Counting by Spatially Regularized Regional Proposal Network. In Proceedings of the 2017 IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 4165–4173.

- Liu, K.; Mattyus, G. Fast Multiclass Vehicle Detection on Aerial Images. IEEE Geosci. Remote Sens. Lett. 2015, 12, 1938–1942.

- Azimi, S.M. ShuffleDet: Real-Time Vehicle Detection Network in On-Board Embedded UAV Imagery. In Computer Vision–ECCV 2018 Workshops; Leal-Taixé, L., Roth, S., Eds.; Springer International Publishing: Cham, Switzerland, 2019; pp. 88–99.

- Zhou, Z.H. A brief introduction to weakly supervised learning. Natl. Sci. Rev. 2017, 5, 44–53.

- Shi, Z.; Yang, Y.; Hospedales, T.M.; Xiang, T. Weakly-Supervised Image Annotation and Segmentation with Objects and Attributes. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 2525–2538.

- Diba, A.; Sharma, V.; Pazandeh, A.; Pirsiavash, H.; Van Gool, L. Weakly Supervised Cascaded Convolutional Networks. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 5131–5139.

| Abbreviation | Reference | Explanation |
|---|---|---|
| DL | [2,25] | Deep Learning |
| ANN | [25] | Artificial Neural Network |
| SGD | [26,27,28,29] | Stochastic Gradient Descent |
| BP | [25,26,30] | Backpropagation |
| ReLU | [31,32,33] | Rectified Linear Unit |
| CNN | [2] | Convolutional Neural Network |
| RNN | [34] | Recurrent Neural Network |
| LSTM | [35] | Long Short Term Memory |
| GAN | [36] | Generative Adversarial Network |
| IR | | Image Recognition |
| ImageNet | [37] | DL dataset |
| ILSVRC | [3] | ImageNet Large Scale Visual Recognition Challenge |
| AlexNet | [3] | CNN by Alex Krizhevsky et al. [3] |
| ZFNet | [38] | CNN by Zeiler and Fergus [38] |
| VGG-16/19 | [39] | CNN by members of the Visual Geometry Group |
| LRN | [3,38] | Local Response Normalisation |
| Inception V1-3 | [40,41,42] | CNN architectures with Inception modules |
| BN | [41] | Batch Normalisation |
| ResNet | [43] | CNN architecture with residual connections |
| ResNeXt | [44] | Advanced CNN architecture with residual connections |
| Xception | [45] | ResNet-Inception combined CNN architecture |
| DenseNet | [46] | Very deep, ResNet based CNN |
| SENet | [47] | Squeeze and Excitation Network |
| NAS | [48] | Neural Architecture Search |
| NASNet | [49] | CNN architecture drafted with NAS |
| MobileNet | [50] | Efficient CNN architecture |
| MnasNet | [51] | Efficient CNN architecture drafted with NAS |
| EfficientNet | [52] | Efficient CNN architecture drafted with NAS |
| IS | | Image Segmentation |
| PASCAL-VOC | [53,54] | Pattern Analysis, Statistical modeling and Computational Learning - Visual Object Classes dataset |
| FCN | [55] | Fully Convolutional Network |
| DeepLabV1-V3+ | [56,57,58,59,60] | CNN architectures for IS |
| CRF | [61] | Conditional Random Field |
| ASPP | [58,59] | Atrous Spatial Pyramid Pooling |
| DPC | [62] | Dense Prediction Cell, CNN module drafted with NAS |
| AutoDeepLab | [63] | CNN architecture drafted with NAS |
| DeconvNet | [64] | Deconvolutional Network CNN |
| ParseNet | [65] | Parsing image context CNN |
| PSPNet | [66] | Pyramid Scene Parsing Network |
| U-Net | [67] | U-shaped encoder–decoder CNN |
| Tiramisu | [68] | U-Net-DenseNet combined CNN |
| RefineNet | [69] | Encoder–decoder CNN |
| HRNetV1-2 | [70,71] | High Resolution Network CNNs for IS |
| OD | | Object Detection |
| MS-COCO | [72] | Microsoft Common Objects in Context dataset |
| R-CNN | [73] | Region based CNN |
| SPPNet | [74] | Spatial Pyramid Pooling Network |
| RoI pooling | [75] | Discretised pooling of Regions of Interest |
| Fast R-CNN | [75] | R-CNN + RoI pooling based CNN |
| RPN | [76] | Region (RoI) Proposal Network |
| Faster R-CNN | [76] | R-CNN + RPN + RoI pooling based CNN |
| FPN | [77] | Feature Pyramid Network |
| IoU | [53,78] | Intersection over Union (metric) |
| Cascade R-CNN | [79] | Faster R-CNN based cascading detector for less noisy detections |
| RoI align | [80] | Floating point variant of RoI pooling for higher accuracy |
| Mask R-CNN | [80] | Faster R-CNN + FCN based instance segmentation |
| CBNet | [81] | Composite Backbone Network for R-CNN based networks |
| PANet | [82] | Path Aggregation Network |
| SNIP(ER) | [83,84] | Scale Normalisation for Image Pyramids (with Efficient Resampling) |
| TridentNet | [85] | CNN using atrous convolution |
| fps | | frames per second (metric) |
| YOLO-V1-3 | [86,87,88] | You Only Look Once |
| NMS | | Non-Maximum Suppression |
| DarkNet | [87,88] | CNN backbone for YOLO-V2+3 |
| SSD | [89] | Single Shot MultiBox Detector |
| RetinaNet | [90] | CNN using an adaptive loss function for OD |
| RefineDet | [91] | CNN performing anchor refinement before detection |
| NAS-FPN | [92] | FPN variant drafted with NAS |
| EfficientDet | [93] | Efficient CNN for OD based on EfficientNet |
| BiFPN | [93] | Bi-directional FPN |
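Two entries of the abbreviation table, IoU and NMS, are simple enough to sketch directly. The code below is a minimal illustration under stated assumptions: boxes are `(x1, y1, x2, y2)` tuples in pixel coordinates, the greedy variant of NMS is used, and the threshold of 0.5 and all box values are hypothetical.

```python
def area(box):
    """Area of an axis-aligned box (x1, y1, x2, y2)."""
    return max(0, box[2] - box[0]) * max(0, box[3] - box[1])


def iou(a, b):
    """Intersection over Union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    union = area(a) + area(b) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, threshold=0.5):
    """Greedy non-maximum suppression; returns indices of kept boxes."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap a higher-scoring kept box.
        if all(iou(boxes[i], boxes[j]) <= threshold for j in keep):
            keep.append(i)
    return keep


boxes = [(0, 0, 10, 10), (1, 0, 11, 10), (20, 20, 30, 30)]
scores = [0.9, 0.8, 0.7]

print(iou((0, 0, 10, 10), (5, 0, 15, 10)))  # 50 / 150
print(nms(boxes, scores))                    # [0, 2]
```

The second box overlaps the first with an IoU of about 0.82 and is suppressed, while the distant third box is kept.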

| Architecture | Year | Bottleneck | Factorisation | Residual | NAS | Parameters [M] | Top-5 accuracy [%] |
|---|---|---|---|---|---|---|---|
| AlexNet [3] | 2012 | | | | | 62 | 81.8 |
| ZFNet [38] | 2013 | | | | | 62 | 83.5 |
| VGG-19 [39] | 2014 | | | | | 144 | 91.9 |
| Inception-V1 + BN [41] | 2015 | ✓ | | | | 11 | 92.2 |
| ResNet-152 [43] | 2015 | ✓ | | ✓ | | 60 | 95.5 |
| Inception-V3 [42] | 2015 | ✓ | ✓ | | | 24 | 94.4 |
| DenseNet-264 [46] | 2016 | ✓ | | ✓ | | 34 | 93.9 |
| Xception [45] | 2016 | ✓ | ✓ | ✓ | | 23 | 94.5 |
| ResNeXt-101 [44] | 2016 | ✓ | | ✓ | | 84 | 95.6 |
| MobileNet-224 [50] | 2017 | ✓ | ✓ | ✓ | | 4.2 | 89.9 |
| NASNet [49] | 2017 | ✓ | ✓ | ✓ | ✓ | 89 | 96.2 |
| MobileNet V2 [108] | 2018 | ✓ | ✓ | ✓ | | 6.1 | 92.5 |
| MnasNet [51] | 2018 | ✓ | ✓ | ✓ | ✓ | 5.2 | 93.3 |
| EfficientNet-B7 [52] | 2019 | ✓ | ✓ | ✓ | ✓ | 66 | 97.1 |

| Architecture | Year | Backbone | Type | CRF | Atrous | Multiscale | NAS | mIoU [%] |
|---|---|---|---|---|---|---|---|---|
| FCN-8s [55] | 2014 | VGG-16 | encoder–decoder | | | | | 62.2 |
| DeepLabV1 [56] | 2014 | VGG-16 | naïve decoder | ✓ | ✓ | | | 66.4 |
| DeconvNet [64] | 2015 | VGG-16 | encoder–decoder | | | | | 69.6 |
| U-Net [67] | 2015 | Own | encoder–decoder | | | | | 72.7 |
| ParseNet [65] | 2015 | VGG-16 | naïve decoder | | | ✓ | | 69.8 |
| DeepLabV2 [58] | 2016 | ResNet-101 | naïve decoder | ✓ | ✓ | ✓ | | 79.7 |
| RefineNet [69] | 2016 | ResNet-152 | encoder–decoder | | | | | 83.4 |
| PSPNet [66] | 2016 | ResNet-101 | naïve decoder | | | ✓ | | 82.6 |
| DeepLabV3 [59] | 2017 | ResNet-101 | naïve decoder | | ✓ | ✓ | | 85.7 |
| DeepLabV3+ [60] | 2018 | Xception | encoder–decoder | | ✓ | ✓ | | 87.8 |
| DensePredictionCell [62] | 2018 | Xception | naïve decoder | | ✓ | ✓ | ✓ | 87.9 |
| Auto-DeepLab [63] | 2019 | Own | naïve decoder | | ✓ | ✓ | ✓ | 82.1 |

Two-Stage Detector

| Architecture | Year | Backbone | RPN | RoI | Multiscale Feature | AP [%] |
|---|---|---|---|---|---|---|
| R-CNN [73] | 2013 | AlexNet | | | | - |
| Fast R-CNN [75] | 2015 | VGG-16 | | pooling | | 19.7 |
| Faster R-CNN [76] | 2015 | VGG-16 | ✓ | pooling | | 27.2 |
| Faster R-CNN + FPN [77] | 2016 | ResNet-101 | ✓ | pooling | FPN | 35.8 |
| Mask R-CNN [80] | 2017 | ResNeXt-101 | ✓ | align | FPN | 39.8 |
| Cascade R-CNN [79] | 2017 | ResNet-101 | ✓ | align | FPN | 50.2 |
| PANet [82] | 2018 | ResNeXt-101 | ✓ | align | FPN | 45 |
| TridentNet [85] | 2019 | ResNet-101-Deformable | ✓ | pooling | 3xAtrous | 48.4 |
| Cascade Mask R-CNN [81] | 2019 | CBNet (3xResNeXt-152) | ✓ | align | FPN | 53.3 |

One-Stage Detector

| Architecture | Year | Backbone | Anchors | NAS | Multiscale Feature | AP [%] |
|---|---|---|---|---|---|---|
| YOLO-V1 [86] | 2015 | custom | | | | - |
| SSD [89] | 2015 | VGG-16 | ✓ | | ✓ | 26.8 |
| YOLO-V2 [87] | 2016 | DarkNet-19 | ✓ | | | 21.6 |
| RetinaNet [90] | 2017 | ResNet-101 | ✓ | | FPN | 39.1 |
| YOLO-V3 [88] | 2018 | DarkNet-53 | ✓ | | FPN-like | 33 |
| EfficientDet-D7 [93] | 2019 | EfficientNet-B6 | ✓ | ✓ | BiFPN | 52.2 |

| Dataset | Task | Topic | Platform | Sensor | Resolution | Example Application by Architectures |
|---|---|---|---|---|---|---|
| NWPU RESISC45 [155] | IR | LULC | multiple platforms | optical | high | VGG-16 [155] |
| EuroSAT [156] | IR | LULC | Sentinel 2 | multispectral | medium | Inception-V1 and ResNet-50 [156] |
| BigEarthNet [116,117] | IR | LULC | Sentinel 2 | multispectral | medium | ResNet-50 [117] |
| So2Sat LCZ42 [157] | IR | local climate zones | Sentinel 1+2 | multispectral + SAR | medium | ResNeXt-29 + CBAM [157] |
| SpaceNet1 [158] | IS | building footprints | - | multispectral | low | VGG-16 + MNC [158,159] |
| SpaceNet2 [160] | IS | building footprints | WorldView3 | multispectral | high | U-Net (modified: input depth = 13) [160] |
| SpaceNet3 [161] | IS | road network | WorldView3 | multispectral | high | ResNet-34 + U-Net [161] |
| SpaceNet4 [162] | IS | building footprints | WorldView2 | multispectral | high | SE-ResNeXt-50/101 + U-Net [162] |
| SpaceNet5 [163] | IS | road network | WorldView3 | multispectral | high | ResNet-50 + U-Net [164], SE-ResNeXt-50 + U-Net [163] |
| SpaceNet6 [165,166] | IS | building footprints | WorldView2 + Capella36 | multispectral + SAR | high | VGG-16 + U-Net [166] |
| ISPRS 2D Sem. Lab. [126] | IS | multiple classes | plane | multispectral | very high | U-Net, DeepLabV3+, PSPNet, LANet (patch attention module) [167], MobileNetV2 (with atrous conv) + Dual path encoder + SE modules [168] |
| DeepGlobe-Road [169] | IS | road network | WorldView3 | multispectral | high | D-LinkNet (ResNet-34 + U-Net with atrous decoder) [170], ResNet-34 + U-Net [171] |
| DeepGlobe-Building [169] | IS | building footprints | WorldView3 | multispectral | high | ResNet-18 + Multitask U-Net [172], WideResNet-38 + U-Net [173] |
| DeepGlobe-LCC [169] | IS | LULC | WorldView3 | multispectral | high | Dense Fusion Classmate Network (DenseNet + FCN variant) [174], Deep Aggregation Net (ResNet + DeepLabV3+ variant) [175] |
| WHU Building [176] | IS | building footprints | multiple platforms | optical | high | VGG-16 + ASPP + FCN [177] |
| INRIA [178] | IS | building footprints | multiple platforms | multispectral | very high | ResNet-50 + SegNet variant [179], U-Net variant [180] |
| DLR-SkyScapes [181] | IS | multiple classes | helicopter | optical | very high | SkyScapesNet (custom design [181]) |
| NWPU VHR-10 [182] | OD | multiple classes | airborne platforms | optical | very high | DarkNet + YOLO (modified: VaryBlock) [183], ResNet-101 + FPN (modified: densely connected top-down path) + fully convolutional detector head [184] |
| COWC [185] | OD | vehicle detection | airborne platforms | optical | very high | VGG16 + SSD + correlation alignment domain adaptation [186] |
| CARPK [187] | OD | vehicle detection | drone | optical | very high | VGG16 + LPN (Layout Proposal Net) [187] |
| DLR 3K Munich [188] | OD | vehicle detection | airborne platform | optical | very high | ShuffleDet (ShuffleNet + modified SSD) [189] |
| DOTA [100] | OD | multiple classes | airborne platforms | optical | very high to high | ResNet-50 + improved Cascade R2CNN (see leaderboard of [100]), ResNet-101/FPN + Faster R-CNN OBB + RoI transformer [138] |
| DIOR [24] | OD | multiple classes | multiple platforms | optical | high to medium | ResNet-101 + PANet and ResNet-101 + RetinaNet [24] |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Hoeser, T.; Kuenzer, C. Object Detection and Image Segmentation with Deep Learning on Earth Observation Data: A Review-Part I: Evolution and Recent Trends. Remote Sens. 2020, 12, 1667. https://doi.org/10.3390/rs12101667
