The Use of Immersive Technologies in Karate Training: A Scoping Review

A Comparison of Parenting Strategies in a Digital Environment: A Systematic Literature Review

How to Design Human-Vehicle Cooperation for Automated Driving: A Review of Use Cases, Concepts, and Interfaces

The FlexiBoard: Tangible and Tactile Graphics for People with Vision Impairments

Do Not Freak Me Out! The Impact of Lip Movement and Appearance on Knowledge Gain and Confidence

Journal Description

Multimodal Technologies and Interaction is an international, peer-reviewed, open access journal on multimodal technologies and interaction published monthly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), Inspec, dblp Computer Science Bibliography, and other databases.

- Journal Rank: JCR - Q2 (Computer Science, Cybernetics) / CiteScore - Q2 (Neuroscience (miscellaneous))

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 14.5 days after submission; acceptance to publication is undertaken in 4.9 days (median values for papers published in this journal in the first half of 2024).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 2.4 (2023)

Latest Articles

Data Governance in Multimodal Behavioral Research

Multimodal Technol. Interact. 2024, 8(7), 55; https://doi.org/10.3390/mti8070055 - 25 Jun 2024

Abstract

In the digital era, multimodal behavioral research has emerged as a pivotal discipline, integrating diverse data sources to comprehensively understand human behavior. This paper defines and distinguishes data governance from mere data management within this context, highlighting its centrality in assuring data quality, ethical handling, and participant protection. Through a meticulous review of the literature and empirical experience, we identify key implementation strategies and elucidate the benefits and risks of data governance frameworks in multimodal research. A demonstrative case study illustrates the practical applications and challenges, revealing enhanced data reliability and research integrity as tangible outcomes. Our findings underscore the critical need for robust data governance, pointing to future advancements in the field, including the development of adaptive governance frameworks, innovative big data analytics solutions, and user-friendly tools. These enhancements are poised to amplify the utility of multimodal data, propelling behavioral science forward.

Open Access Article

Emotion-Aware In-Car Feedback: A Comparative Study

by Kevin Fred Mwaita, Rahul Bhaumik, Aftab Ahmed, Adwait Sharma, Antonella De Angeli and Michael Haller

Multimodal Technol. Interact. 2024, 8(7), 54; https://doi.org/10.3390/mti8070054 - 25 Jun 2024

Abstract

We investigate personalised feedback mechanisms to help drivers regulate their emotions, aiming to improve road safety. We systematically evaluate driver-preferred feedback modalities and their impact on emotional states. Using unobtrusive vision-based emotion detection and self-labeling, we captured the emotional states and feedback preferences of 21 participants in a simulated driving environment. Results show that in-car feedback systems effectively influence drivers’ emotional states, with participants reporting positive experiences and varying preferences based on their emotions. We also developed a machine learning classification system using facial marker data to demonstrate the feasibility of our approach for classifying emotional states. Our contributions include design guidelines for tailored feedback systems, a systematic analysis of user reactions across three feedback channels with variations, an emotion classification system, and a dataset with labeled face landmark annotations for future research.

Open Access Article

Autoethnography of Living with a Sleep Robot

by Bijetri Biswas, Erin Dooley, Elizabeth Coulthard and Anne Roudaut

Multimodal Technol. Interact. 2024, 8(6), 53; https://doi.org/10.3390/mti8060053 - 18 Jun 2024

Abstract

Soft robotics is used in real-world clinical situations, including surgery, rehabilitation, and diagnosis. However, several challenges remain to make soft robots more viable, especially for clinical interventions such as improving sleep quality, which impacts physiological and mental health. This paper presents an autoethnographic account of the experience of sleeping with a companion robot (Somnox), which mimics breathing to promote better sleep. The study is motivated by the key author’s experience with insomnia and a desire to better understand how Somnox is used in different social contexts. Data were collected through diary entries for 16 weeks (8 weeks without, 8 weeks with) and analysed thematically. The findings indicate improved sleep and observations about the relationship developed with the companion robot, including emotional connection and empathy for the technology. Furthermore, Somnox is a multidimensional family companion robot that can ease stomach discomfort and stress, reduce anxiety, and provide holistic care.

(This article belongs to the Special Issue Challenges in Human-Centered Robotics)

Open Access Systematic Review

Mobile AR Interaction Design Patterns for Storytelling in Cultural Heritage: A Systematic Review

by Andreas Nikolarakis and Panayiotis Koutsabasis

Multimodal Technol. Interact. 2024, 8(6), 52; https://doi.org/10.3390/mti8060052 - 17 Jun 2024

Abstract

The recent advancements in mobile technologies have enabled the widespread adoption of augmented reality (AR) to enrich cultural heritage (CH) digital experiences. Mobile AR leverages visual recognition capabilities and sensor data to superimpose digital elements into the user’s view of their surroundings. The pervasive nature of AR serves several purposes in CH: visitor guidance, 3D reconstruction, educational experiences, and mobile location-based games. While most literature reviews on AR in CH focus on technological aspects such as tracking algorithms and software frameworks, there has been little exploration of the expressive affordances of AR for the delivery of meaningful interactions. This paper (based on the PRISMA guidelines) considers 64 selected publications, published from 2016 to 2023, that present mobile AR applications in CH, with the aim of identifying and analyzing the (mobile) AR (interaction) design patterns that have so far been discussed sporadically in the literature. We identify sixteen (16) main UX design patterns, as well as eight (8) patterns with a single occurrence in the paper corpus, that have been employed—sometimes in combination—to address recurring design problems or contexts, e.g., user navigation, representing the past, uncovering hidden elements, etc. We analyze each AR design pattern by providing a title, a working definition, principal use cases, and abstract illustrations that indicate the main concept and its workings (where applicable) and explanation with respect to examples from the paper corpus. We discuss the AR design patterns in terms of a few broader design and development concerns, including the AR recognition approach, content production and development requirements, and affordances for storytelling, as well as possible contexts and experiences, including indoor/outdoor settings, location-based experiences, mobile guides, and mobile games. 
We envisage that this work will thoroughly inform AR designers and developers about the current state of the art and the possibilities and affordances of mobile AR design patterns with respect to particular CH contexts.

Open Access Article

LightSub: Unobtrusive Subtitles with Reduced Information and Decreased Eye Movement

by Yuki Nishi, Yugo Nakamura, Shogo Fukushima and Yutaka Arakawa

Multimodal Technol. Interact. 2024, 8(6), 51; https://doi.org/10.3390/mti8060051 - 14 Jun 2024

Abstract

Subtitles play a crucial role in facilitating the understanding of visual content when watching films and television programs. In this study, we propose a method for presenting subtitles in a way that considers cognitive load when viewing video content in a non-native language. Subtitles are generally displayed at the bottom of the screen, which causes frequent eye focus switching between subtitles and video, increasing the cognitive load. In our proposed method, we focused on the position, display time, and amount of information contained in the subtitles to reduce the cognitive load and to avoid disturbing the viewer’s concentration. We conducted two experiments to investigate the effects of our proposed subtitle method on gaze distribution, comprehension, and cognitive load during English-language video viewing. Twelve non-native English-speaking subjects participated in the first experiment. The results show that participants’ gazes were more focused around the center of the screen when using our proposed subtitles compared to regular subtitles. Comprehension levels recorded using LightSub were similar, but slightly inferior to those recorded using regular subtitles. However, it was confirmed that most of the participants were viewing the video with a higher cognitive load using the proposed subtitle method. In the second experiment, we investigated subtitles considering connected speech form in English with 18 non-native English speakers. The results revealed that the proposed method, considering connected speech form, demonstrated an improvement in cognitive load during video viewing but it remained higher than that of regular subtitles.

Open Access Systematic Review

Active Learning Strategies in Computer Science Education: A Systematic Review

by Diana-Margarita Córdova-Esparza, Julio-Alejandro Romero-González, Karen-Edith Córdova-Esparza, Juan Terven and Rocio-Edith López-Martínez

Multimodal Technol. Interact. 2024, 8(6), 50; https://doi.org/10.3390/mti8060050 - 13 Jun 2024

Abstract

The main purpose of this study is to examine the implementation of active methodologies in the teaching–learning process in computer science. To achieve this objective, a systematic review using the PRISMA method was performed; the search for articles was conducted through the Scopus and Web of Science databases and the scientific search engine Google Scholar. By establishing inclusion and exclusion criteria, 15 research papers were selected addressing the use of various active methodologies which have had a positive impact on students’ learning processes. Among the principal active methodologies highlighted are problem-based learning, flipped classrooms, and gamification. The results of the review show how active methodologies promote significant learning, in addition to fostering greater commitment, participation, and motivation on the students’ part. It was observed that active methodologies contribute to the development of fundamental cognitive and socio-emotional skills for their professional growth.

Open Access Article

OnMapGaze and GraphGazeD: A Gaze Dataset and a Graph-Based Metric for Modeling Visual Perception Differences in Cartographic Backgrounds Used in Online Map Services

by Dimitrios Liaskos and Vassilios Krassanakis

Multimodal Technol. Interact. 2024, 8(6), 49; https://doi.org/10.3390/mti8060049 - 13 Jun 2024

Abstract

In the present study, a new eye-tracking dataset (OnMapGaze) and a graph-based metric (GraphGazeD) for modeling visual perception differences are introduced. The dataset includes both experimental and analyzed gaze data collected during the observation of different cartographic backgrounds used in five online map services, including Google Maps, Wikimedia, Bing Maps, ESRI, and OSM, at three different zoom levels (12z, 14z, and 16z). The computation of the new metric is based on the utilization of aggregated gaze behavior data. Our dataset aims to serve as an objective ground truth for feeding artificial intelligence (AI) algorithms and developing computational models for predicting visual behavior during map reading. Both the OnMapGaze dataset and the source code for computing the GraphGazeD metric are freely distributed to the scientific community.

Open Access Article

Metaverse & Human Digital Twin: Digital Identity, Biometrics, and Privacy in the Future Virtual Worlds

by Pietro Ruiu, Michele Nitti, Virginia Pilloni, Marinella Cadoni, Enrico Grosso and Mauro Fadda

Multimodal Technol. Interact. 2024, 8(6), 48; https://doi.org/10.3390/mti8060048 - 5 Jun 2024

Abstract

Driven by technological advances in various fields (AI, 5G, VR, IoT, etc.) together with the emergence of digital twin technologies (HDT, HAL, BIM, etc.), the Metaverse has attracted growing attention from scientific and industrial communities. This interest is due to its potential impact on people’s lives in different sectors such as education or medicine. Specific solutions can also increase inclusiveness of people with disabilities that are an impediment to a fulfilled life. However, security and privacy concerns remain the main obstacles to its development. Particularly, the data involved in the Metaverse can be comprehensive with enough granularity to build a highly detailed digital copy of the real world, including a Human Digital Twin of a person. Existing security countermeasures are largely ineffective and lack adaptability to the specific needs of Metaverse applications. Furthermore, the virtual worlds in a large-scale Metaverse can be highly varied in terms of hardware implementation, communication interfaces, and software, which poses huge interoperability difficulties. This paper aims to analyse the risks and opportunities associated with adopting digital replicas of humans (HDTs) within the Metaverse and the challenges related to managing digital identities in this context. By examining the current technological landscape, we identify several open technological challenges that currently limit the adoption of HDTs and the Metaverse. Additionally, this paper explores a range of promising technologies and methodologies to assess their suitability within the Metaverse context. Finally, two example scenarios are presented in the Medical and Education fields.

(This article belongs to the Special Issue Designing an Inclusive and Accessible Metaverse)

Open Access Article

Exploring Human Emotions: A Virtual Reality-Based Experimental Approach Integrating Physiological and Facial Analysis

by Leire Bastida, Sara Sillaurren, Erlantz Loizaga, Eneko Tomé and Ana Moya

Multimodal Technol. Interact. 2024, 8(6), 47; https://doi.org/10.3390/mti8060047 - 4 Jun 2024

Abstract

This paper researches the classification of human emotions in a virtual reality (VR) context by analysing psychophysiological signals and facial expressions. Key objectives include exploring emotion categorisation models, identifying critical human signals for assessing emotions, and evaluating the accuracy of these signals in VR environments. A systematic literature review was performed through peer-reviewed articles, forming the basis for our methodologies. The integration of various emotion classifiers employs a ‘late fusion’ technique due to varying accuracies among classifiers. Notably, facial expression analysis faces challenges from VR equipment occluding crucial facial regions like the eyes, which significantly impacts emotion recognition accuracy. A weighted averaging system prioritises the psychophysiological classifier over the facial recognition classifiers due to its higher accuracy. Findings suggest that while combined techniques are promising, they struggle with mixed emotional states as well as with fear and trust emotions. The research underscores the potential and limitations of current technologies, recommending enhanced algorithms for effective interpretation of complex emotional expressions in VR. The study provides a groundwork for future advancements, aiming to refine emotion recognition systems through systematic data collection and algorithm optimisation.

Open Access Article

Sound of the Police—Virtual Reality Training for Police Communication for High-Stress Operations

by Markus Murtinger, Jakob Carl Uhl, Lisa Maria Atzmüller, Georg Regal and Michael Roither

Multimodal Technol. Interact. 2024, 8(6), 46; https://doi.org/10.3390/mti8060046 - 4 Jun 2024

Abstract

Police communication is a field with unique challenges and specific requirements. Police officers depend on effective communication, particularly in high-stress operations, but current training methods are not focused on communication and provide only limited evaluation methods. This work explores the potential of virtual reality (VR) for enhancing police communication training. The rise of VR training, especially in specific application areas like policing, provides benefits. We conducted a field study during police training to assess VR approaches for training communication. The results show that VR is suitable for communication training if factors such as realism, reflection and repetition are given in the VR system. Trainer feedback shows that assistive systems for evaluation and visualization of communication are highly needed. We present ideas and approaches for evaluation in communication training and concepts for visualization and exploration of the data. This research contributes to improving VR police training and has implications for communication training in VR in challenging contexts.

(This article belongs to the Special Issue 3D User Interfaces and Virtual Reality)

Open Access Article

What the Mind Can Comprehend from a Single Touch

by Patrick Coe, Grigori Evreinov, Mounia Ziat and Roope Raisamo

Multimodal Technol. Interact. 2024, 8(6), 45; https://doi.org/10.3390/mti8060045 - 28 May 2024

Abstract

This paper investigates the versatility of force feedback (FF) technology in enhancing user interfaces across a spectrum of applications. We delve into the human finger pad’s sensitivity to FF stimuli, which is critical to the development of intuitive and responsive controls in sectors such as medicine, where precision is paramount, and entertainment, where immersive experiences are sought. The study presents a case study in the automotive domain, where FF technology was implemented to simulate mechanical button presses, reducing the JND FF levels that were between 0.04 N and 0.054 N to the JND levels of 0.254 and 0.298 when using a linear force feedback scale and those that were 0.028 N and 0.033 N to the JND levels of 0.074 and 0.164 when using a logarithmic force scale. The results demonstrate the technology’s efficacy and potential for widespread adoption in various industries, underscoring its significance in the evolution of haptic feedback systems.

Open Access Article

A Wearable Bidirectional Human–Machine Interface: Merging Motion Capture and Vibrotactile Feedback in a Wireless Bracelet

by Julian Kindel, Daniel Andreas, Zhongshi Hou, Anany Dwivedi and Philipp Beckerle

Multimodal Technol. Interact. 2024, 8(6), 44; https://doi.org/10.3390/mti8060044 - 23 May 2024

Abstract

Humans interact with the environment through a variety of senses. Touch in particular contributes to a sense of presence, enhancing perceptual experiences, and establishing causal relations between events. Many human–machine interfaces only allow for one-way communication, which does not do justice to the complexity of the interaction. To address this, we developed a bidirectional human–machine interface featuring a bracelet equipped with linear resonant actuators, controlled via a Robot Operating System (ROS) program, to simulate haptic feedback. Further, the wireless interface includes a motion sensor and a sensor to quantify the tightness of the bracelet. Our functional experiments, which compared stimulation with three and five intensity levels, respectively, were performed by four healthy participants in their twenties and thirties. The participants achieved an average accuracy of 88% estimating three vibration intensity levels. While the estimation accuracy for five intensity levels was only 67%, the results indicated a good performance in perceiving relative vibration changes with an accuracy of 82%. The proposed haptic feedback bracelet will facilitate research investigating the benefits of bidirectional human–machine interfaces and the perception of vibrotactile feedback in general by closing the gap for a versatile device that can provide high-density user feedback in combination with sensors for intent detection.

Open Access Article

Exploring the Role of User Experience and Interface Design Communication in Augmented Reality for Education

by Matina Kiourexidou, Andreas Kanavos, Maria Klouvidaki and Nikos Antonopoulos

Multimodal Technol. Interact. 2024, 8(6), 43; https://doi.org/10.3390/mti8060043 - 22 May 2024

Abstract

Augmented Reality (AR) enhances learning by integrating interactive and immersive elements that bring content to life, thus increasing motivation and improving retention. AR also supports personalized learning, allowing learners to interact with content at their own pace and according to their preferred learning styles. This adaptability not only promotes self-directed learning but also empowers learners to take charge of their educational journey. Effective interface design is crucial for these AR applications, requiring careful integration of user interactions and visual cues to blend AR elements seamlessly with reality. This paper explores the impact of AR on user experience within educational settings, examining engagement, motivation, and learning outcomes to determine how AR can enhance the educational experience. Additionally, it addresses design considerations and challenges in developing AR user interfaces, drawing on current research and best practices to propose effective and adaptable solutions for educational AR applications. As AR technology evolves, its potential to transform educational experiences continues to grow, promising significant advancements in how users interact with, personalize, and immerse themselves in learning content.

Open Access Article

Recall of Odorous Objects in Virtual Reality

by Jussi Rantala, Katri Salminen, Poika Isokoski, Ville Nieminen, Markus Karjalainen, Jari Väliaho, Philipp Müller, Anton Kontunen, Pasi Kallio and Veikko Surakka

Multimodal Technol. Interact. 2024, 8(6), 42; https://doi.org/10.3390/mti8060042 - 21 May 2024

Abstract

The aim was to investigate how the congruence of odors and visual objects in virtual reality (VR) affects later memory recall of the objects. Participants (N = 30) interacted with 12 objects in VR. The interaction was varied by odor congruency (i.e., the odor matched the object’s visual appearance, the odor did not match the object’s visual appearance, or the object had no odor); odor quality (i.e., an authentic or a synthetic odor); and interaction type (i.e., participants could look and manipulate or could only look at objects). After interacting with the 12 objects, incidental memory performance was measured with a free recall task. In addition, the participants rated the pleasantness and arousal of the interaction with each object. The results showed that the participants remembered significantly more objects with congruent odors than objects with incongruent odors or odorless objects. Furthermore, interaction with congruent objects was rated significantly more pleasant and relaxed than interaction with incongruent objects. Odor quality and interaction type did not have significant effects on recall or emotional ratings. These results can be utilized in the development of multisensory VR applications.

Open Access Article

User-Centered Evaluation Framework to Support the Interaction Design for Augmented Reality Applications

by Andrea Picardi and Giandomenico Caruso

Multimodal Technol. Interact. 2024, 8(5), 41; https://doi.org/10.3390/mti8050041 - 14 May 2024

Abstract

The advancement of Augmented Reality (AR) technology has been remarkable, enabling the augmentation of user perception with timely information. This progress holds great promise in the field of interaction design. However, the mere advancement of technology is not enough to ensure widespread adoption. The user dimension has been somewhat overlooked in AR research due to a lack of attention to user motivations, needs, usability, and perceived value. The critical aspects of AR technology tend to be overshadowed by the technology itself. To ensure appropriate future assessments, it is necessary to thoroughly examine and categorize all the methods used for AR technology validation. By identifying and classifying these evaluation methods, researchers and practitioners will be better equipped to develop and validate new AR techniques and applications. Therefore, comprehensive and systematic evaluations are critical to the advancement and sustainability of AR technology. This paper presents a theoretical framework derived from a cluster analysis of the most efficient evaluation methods for AR extracted from 399 papers. Evaluation methods were clustered according to the application domains and the human–computer interaction aspects to be investigated. This framework should facilitate rapid development cycles prioritizing user requirements, ultimately leading to groundbreaking interaction methods accessible to a broader audience beyond research and development centers.

Open Access Article

Immersive Virtual Colonography Viewer for Colon Growths Diagnosis: Design and Think-Aloud Study

by João Serras, Andrew Duchowski, Isabel Nobre, Catarina Moreira, Anderson Maciel and Joaquim Jorge

Multimodal Technol. Interact. 2024, 8(5), 40; https://doi.org/10.3390/mti8050040 - 13 May 2024

Abstract

Desktop-based virtual colonoscopy is a proven and accurate process for identifying colon abnormalities. However, it is time-consuming. Faster, immersive interfaces for virtual colonoscopy are still incipient and need to be better understood. This article introduces a novel design that leverages VR paradigm components to enhance the efficiency and effectiveness of immersive analysis. Our approach contributes a novel tool highlighting unseen areas within the colon via eye-tracking, a flexible navigation approach, and a distinct interface for displaying scans blended with the reconstructed colon surface. The path to evaluating and validating such a tool for clinical settings is arduous. This article contributes a formative evaluation using think-aloud sessions with radiology experts and students. Questions related to colon coverage, diagnostic accuracy, and time to complete are analyzed with different user profiles. Although not aimed at quantitatively measuring performance, the experiment provides lessons learned to guide other researchers in the field.

Open Access Article

Design and Validation of a Computational Thinking Test for Children in the First Grades of Elementary Education

by

Jorge Hernán Aristizábal Zapata, Julián Esteban Gutiérrez Posada and Pascual D. Diago

Multimodal Technol. Interact. 2024, 8(5), 39; https://doi.org/10.3390/mti8050039 - 9 May 2024

Abstract

Computational thinking (CT) has garnered significant interest in both computer science and education sciences as it delineates a set of skills that emerge during the problem-solving process. Consequently, numerous assessment instruments aimed at measuring CT have been developed in recent years. However, few of the existing CT measurement instruments target early school ages, and few have undergone rigorous validation or reliability testing. Therefore, this work introduces a new instrument for measuring CT in the early grades of elementary education: the Computational Thinking Test for Children (CTTC). We present the design and validation of the CTTC, which is constructed around spatial, sequential, and logical thinking and encompasses abstraction, decomposition, pattern recognition, and coding items organized in five question blocks. The validation and standardization process employs the Kuder–Richardson statistic (KR-20) and expert judgment using Aiken's V for consistency. Additionally, item difficulty indices were used to gauge the difficulty level of each question in the CTTC. The study concludes that the CTTC demonstrates consistency and suitability for children in the first cycle of primary education (encompassing the first to third grades).

Open Access Review

A Narrative Review of the Sociotechnical Landscape and Potential of Computer-Assisted Dynamic Assessment for Children with Communication Support Needs

by

Christopher S. Norrie, Stijn R. J. M. Deckers, Maartje Radstaake and Hans van Balkom

Multimodal Technol. Interact. 2024, 8(5), 38; https://doi.org/10.3390/mti8050038 - 7 May 2024

Abstract

This paper presents a narrative review of the current practices in assessing learners’ cognitive abilities and the limitations of traditional intelligence tests in capturing a comprehensive understanding of a child’s learning potential. Referencing prior research, it explores the concept of dynamic assessment (DA) as a promising yet underutilised alternative that focuses on a child’s responsiveness to learning opportunities. The paper highlights the potential of novel technologies, in particular tangible user interfaces (TUIs), in integrating computational science with DA to improve the access and accuracy of assessment results, especially for children with communication support needs (CSN), as a catalyst for abetting critical communicative competencies. However, existing research in this area has mainly focused on the automated mediation of DA, neglecting the human element that is crucial for effective solutions in special education. A framework is proposed to address these issues, combining pedagogical and sociocultural elements alongside adaptive information technology solutions in an assessment system informed by user-centred design principles to fully support teachers/facilitators and learners with CSN within the special education ecosystem.

(This article belongs to the Special Issue Multimodal User Interfaces and Experiences: Challenges, Applications, and Perspectives)

Open Access Article

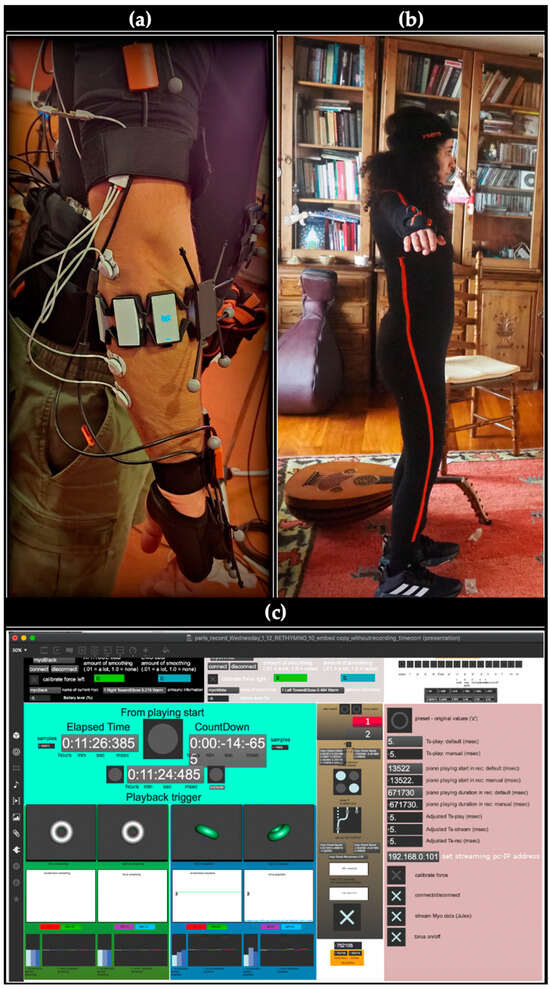

Multimodal Embodiment Research of Oral Music Traditions: Electromyography in Oud Performance and Education Research of Persian Art Music

by

Stella Paschalidou

Multimodal Technol. Interact. 2024, 8(5), 37; https://doi.org/10.3390/mti8050037 - 7 May 2024

Abstract

With the recent advent of research focusing on the body’s significance in music, the integration of physiological sensors in the context of empirical methodologies for music has also gained momentum. Given the recognition of covert muscular activity as a strong indicator of musical intentionality and the previously ascertained link between physical effort and various musical aspects, the use of electromyography (EMG), which records signals representing muscle activity, has also seen a noticeable surge. While EMG technologies appear to hold good promise for sensing, capturing, and interpreting the dynamic properties of movement in music, which are considered innately linked to artistic expressive power, they also come with certain challenges, misconceptions, and predispositions. The paper critically examines the use of muscle force values from EMG sensors as indicators of physical effort and musical activity, focusing in particular on the intuitively expected link to sound levels. For this, it draws on empirical work, namely practical insights from a case study of music performance (Persian instrumental music) in the context of a music class. The findings indicate that muscle force can be explained by a small set of six statistically significant acoustic and movement features, the latter captured by a state-of-the-art full-body inertial motion capture system. However, no straightforward link to sound levels is evident.

(This article belongs to the Special Issue Multimodal Interaction in Education)

Open Access Article

Saliency-Guided Point Cloud Compression for 3D Live Reconstruction

by

Pietro Ruiu, Lorenzo Mascia and Enrico Grosso

Multimodal Technol. Interact. 2024, 8(5), 36; https://doi.org/10.3390/mti8050036 - 3 May 2024

Cited by 2

Abstract

3D modeling and reconstruction are critical to creating immersive XR experiences, providing realistic virtual environments, objects, and interactions that increase user engagement and enable new forms of content manipulation. Today, 3D data can be easily captured using off-the-shelf, specialized headsets; very often, these tools provide real-time, albeit low-resolution, integration of continuously captured depth maps. This approach is generally suitable for basic AR and MR applications, where users can easily direct their attention to points of interest and benefit from a fully user-centric perspective. However, it proves to be less effective in more complex scenarios such as multi-user telepresence or telerobotics, where real-time transmission of local surroundings to remote users is essential. Two primary questions emerge: (i) what strategies are available for achieving real-time 3D reconstruction in such systems? and (ii) how can the effectiveness of real-time 3D reconstruction methods be assessed? This paper explores various approaches to the challenge of live 3D reconstruction from typical point cloud data. It first introduces some common data flow patterns that characterize virtual reality applications and shows that achieving high-speed data transmission and efficient data compression is critical to maintaining visual continuity and ensuring a satisfactory user experience. The paper thus introduces the concept of saliency-driven compression/reconstruction and compares it with alternative state-of-the-art approaches.

Topics

Topic in

Information, Mathematics, MTI, Symmetry

Youth Engagement in Social Media in the Post COVID-19 Era

Topic Editors: Naseer Abbas Khan, Shahid Kalim Khan, Abdul Qayyum

Deadline: 30 September 2024

Special Issues

Special Issue in

MTI

Multimodal Interaction in Education

Guest Editor: Wajeeh Daher

Deadline: 20 August 2024

Special Issue in

MTI

Effectiveness of Serious Games in Risk Communication of Natural Disasters

Guest Editors: Pedro Albuquerque Santos, Maria Ana Viana-Baptista, Rui Jesus

Deadline: 20 September 2024

Special Issue in

MTI

Innovative Theories and Practices for Designing and Evaluating Inclusive Educational Technology and Online Learning

Guest Editor: Julius Nganji

Deadline: 30 September 2024

Special Issue in

MTI

Cooperative Intelligence in Automated Driving- 2nd Edition

Guest Editors: Myounghoon Jeon (Philart), Ronald Schroeter, Andreas Riener

Deadline: 20 October 2024